Cesar Espinoza and Veronica Dominguez at Rosen USA break down the essentials for ensuring smooth and effective data management and integrity.

Data management refers to the process of collecting, organising and storing large amounts of data in such a way that it can be useful and easy to access, navigate, and analyse.

Facilities owners and operators now have more access to mechanical integrity data than ever before. As technology continues to advance, data will become increasingly accessible.

On the other hand, the challenges of working with large volumes of data, and the need for automated systems to manage it, will become more prevalent.

The ultimate goal of an integrity management programme is to ensure and maintain safe and reliable operations. Effectively generating risk profiles at different hierarchy levels (plant, unit, equipment and circuit levels) helps to determine what risks exist and where they are. Support can then be provided to the owner to mitigate them.
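As a rough illustration of how a risk profile can be rolled up across hierarchy levels, the sketch below takes circuit-level risk scores and reports the worst score at each level. The asset names, the scores, and the choice of the maximum as the roll-up rule are all assumptions made for the example; a real programme would apply its own risk model.

```python
from collections import defaultdict

# Hypothetical circuit-level risk scores (higher = worse); names and values are illustrative.
circuit_risk = [
    # (plant, unit, equipment, circuit, risk_score)
    ("Plant A", "GRU", "V-101", "C-01", 12),
    ("Plant A", "GRU", "V-101", "C-02", 20),
    ("Plant A", "GRU", "E-205", "C-03", 6),
    ("Plant A", "CDU", "T-300", "C-04", 9),
]

LEVELS = ("plant", "unit", "equipment", "circuit")


def roll_up(records, depth):
    """Return the worst (maximum) risk score for each asset at the given hierarchy depth."""
    worst = defaultdict(int)
    for *path, score in records:
        key = tuple(path[:depth])
        worst[key] = max(worst[key], score)
    return dict(worst)


# Print one risk profile per hierarchy level, from plant down to circuit.
for depth, level in enumerate(LEVELS, start=1):
    print(level, roll_up(circuit_risk, depth))
```

Taking the maximum keeps a single high-risk circuit visible at plant level; an average or a weighted sum would be equally easy to swap in if that better matches how the risk scores are defined.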

When organisations can effectively do this, they achieve greater production uptime, more efficient operations, and more reliable predictions.

Types of data management

There are two levels of data management in a typical processing facility. The first uses simple models to pull relevant and validated data from the established sources to visualise correlations and trends. This is done by filtering the data and generating charts. Integrity management personnel use this level of data management on a daily basis for routine tasks. Examples include wall thickness data; operating temperatures and pressures; sample chemical analysis results (such as pH, water cut, and chlorides content); and corrosion rates.
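A minimal sketch of this first level, assuming a corrosion rate is estimated as wall loss divided by the time elapsed between two thickness readings. The readings, the dates, and the rate limit are hypothetical values chosen only to show the filtering step.

```python
from datetime import date

# Hypothetical readings for one measurement location: (inspection date, wall thickness in mm).
readings = [
    (date(2018, 1, 1), 12.0),
    (date(2020, 1, 1), 11.6),
    (date(2022, 1, 1), 11.0),
]


def corrosion_rate_mm_per_year(earlier, later):
    """Wall loss between two readings divided by the elapsed time in years."""
    (d0, t0), (d1, t1) = earlier, later
    years = (d1 - d0).days / 365.25
    return (t0 - t1) / years


# Rate between the two most recent readings, and over the whole history.
short_term = corrosion_rate_mm_per_year(readings[-2], readings[-1])
long_term = corrosion_rate_mm_per_year(readings[0], readings[-1])

# Filter step: flag the location if the recent rate exceeds an assumed limit.
RATE_LIMIT = 0.25  # mm/year, illustrative threshold only
print(f"short-term {short_term:.2f} mm/y, long-term {long_term:.2f} mm/y")
print("flagged" if short_term > RATE_LIMIT else "ok")
```

Comparing the short-term and long-term rates side by side is one simple way to spot a location where wall loss is accelerating.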

The next level uses more complex analytical models and generally requires data scientists and machine learning software. It typically requires specialised third-party involvement, with the aim of developing a more predictive, forward-looking strategy.

Without a clear and proper strategy for data management, facility owners will become tied to segmented and qualitative approaches for managing asset integrity. This translates into subjectivity and variability in how reliability programmes are managed; decisions will not be data-driven and therefore will not be consistently applied.

The world of possibilities enabled by proficient data management becomes evident within every aspect of facility operations. Data management accomplished through a well-suited integrity management application (IMA) and mobile data reporting solutions can result in significant time and cost savings. These streamlined capabilities allow faster inspection activities, while the collected data becomes available in real time. Data management can also aid post-inspection work by making it more informed and relevant.

Challenges of data management

At any facility, there are normally several systems simultaneously collecting and processing good data. These systems and data sets are often siloed, since they don’t effectively communicate with each other. There may be information nuggets that could greatly support asset management, but that can only become evident once the data is integrated.

One way to overcome this challenge is to integrate the data silos from different departments (inspection, maintenance, operations, etc.) into a centralised data repository, and then apply predictive analytics to obtain useful outputs for inspectors, engineers, and maintenance personnel.
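The integration step can be sketched as follows, assuming every departmental system can export records keyed by a shared equipment tag. The tags, field names, and values are hypothetical, and the sketch covers only the merge into one repository, not the predictive analytics applied afterwards.

```python
from collections import defaultdict

# Hypothetical extracts from three departmental systems, each keyed by an equipment tag.
inspection = [{"tag": "V-101", "min_thickness_mm": 11.0}]
maintenance = [{"tag": "V-101", "work_orders": 3}, {"tag": "E-205", "work_orders": 1}]
operations = [{"tag": "V-101", "avg_temp_c": 48.0}, {"tag": "E-205", "avg_temp_c": 120.0}]


def integrate(**sources):
    """Merge records from each named source into one dict per equipment tag."""
    repository = defaultdict(dict)
    for source_name, records in sources.items():
        for record in records:
            tag = record["tag"]
            for field, value in record.items():
                if field != "tag":
                    # Prefix with the source name so the origin of each value stays traceable.
                    repository[tag][f"{source_name}.{field}"] = value
    return dict(repository)


central = integrate(inspection=inspection, maintenance=maintenance, operations=operations)

# Equipment with no inspection data only becomes visible once the sources sit side by side.
missing_inspection = [
    tag for tag, rec in central.items()
    if not any(key.startswith("inspection.") for key in rec)
]
print(central["V-101"])
print("no inspection data:", missing_inspection)
```

In practice the hard part is usually agreeing on the shared key; if departments name the same asset differently, a tag-mapping table has to come before any merge like this one.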

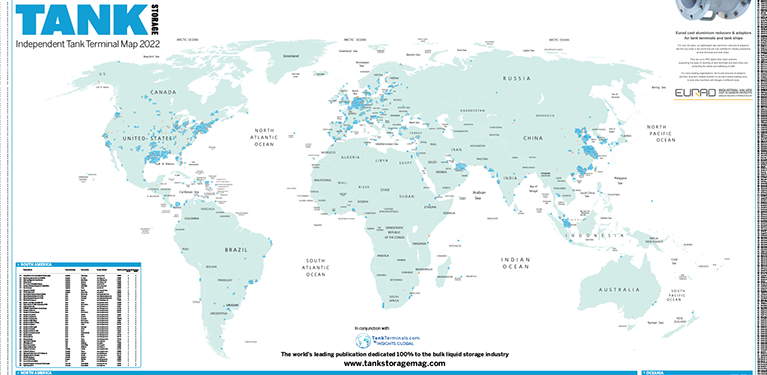

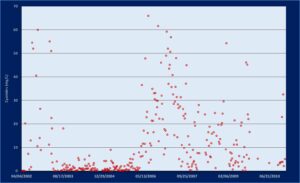

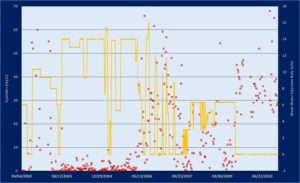

The charts above illustrate the importance of correlating data. Two sets of data belonging to different databases were plotted together with the aim of determining correlations and patterns that could lead to a better understanding of a series of failures associated with aqueous sour water corrosion and hydrogen-induced cracking in a gas recovery unit (GRU) in a typical refinery.

Both corrosion mechanisms are widely associated with the presence of H2S in the aqueous phase (wet H2S damage) and with a high content of cyanides and ammonia. In the first chart (Figure 01), process engineers plotted the cyanides content in the water (vertical axis, in mg/L) at the boot outlet of one of the pressure vessels over time. This data is captured from water samples taken from several sample points and then analysed in the refinery laboratory. The trend revealed that cyanides were under control for a certain period of time and then became erratic, exceeding the design thresholds.

Plotting the lab analysis of the water samples against other data sets made evident how the lack of wash water injection contributed to the significant increase in free atomic hydrogen available to permeate through the steel and cause hydrogen damage (Figure 02). The wash water injection data (right vertical axis, in tons per hour) was captured by the plant operations monitoring software.
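A comparison of this kind can be sketched by aligning the two sources on their shared time periods and computing a correlation coefficient. The monthly values below are made up for illustration and are not the refinery data behind the charts; Pearson correlation is also an assumption here, since the article does not state which measure, if any, was used alongside the plots.

```python
from math import sqrt

# Made-up monthly values for illustration only; NOT the refinery data behind the charts.
cyanide_mg_per_l = {
    "2021-01": 10, "2021-02": 12, "2021-03": 11,
    "2021-04": 30, "2021-05": 42, "2021-06": 38,
}
wash_water_t_per_h = {
    "2021-01": 5.0, "2021-02": 4.8, "2021-03": 5.1,
    "2021-04": 2.0, "2021-05": 0.5, "2021-06": 1.0, "2021-07": 4.9,
}


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Align the lab data and the operations data on the periods they have in common.
periods = sorted(cyanide_mg_per_l.keys() & wash_water_t_per_h.keys())
r = pearson(
    [cyanide_mg_per_l[p] for p in periods],
    [wash_water_t_per_h[p] for p in periods],
)
print(f"{len(periods)} shared periods, r = {r:.2f}")
```

A strongly negative coefficient in a sketch like this would be consistent with cyanides rising as wash water injection falls, but it only indicates a pattern worth investigating, not a cause.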

By leveraging failure history and maintenance costs from the computerised maintenance management system (CMMS), it becomes possible to determine the cost and losses associated with this operational constraint. This study provided the refinery owners with clear decision-making criteria to solve the problem.

Clear procedures for large data sets

Managing and organising large volumes of data can be complex, costly and time consuming. Facility owners are continuously collecting large volumes of data points, often without knowing whether (or even how) they will be able to make use of them, and potentially with management systems not mature enough to fully leverage this data.

To overcome this challenge, a robust data management system is paramount. These systems need to handle the complex relationships between different types of data or data sets, and need to provide users with the ability to easily find and reproduce the desired information. This can require sophisticated systems that can support the needs of different users across the organisation, and can provide the right level of access and controls.

To ensure good data can be leveraged by all interested departments, it is important to have clear procedures in place for all aspects of the data collection protocols.

This includes training and supervision of the personnel involved in the data collection, the use of standardised measurement instruments, and the procedures for verifying the accuracy of the data. Together, these help ensure the data is properly collected and incorporated into a data management system.
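One way such verification procedures might be automated is to check each reading at the point of entry, before it reaches the data management system. The sketch below is an assumption-laden example: the required fields, the parameter names, and the acceptance limits are all hypothetical and would come from a facility's own procedures.

```python
# Hypothetical acceptance limits per parameter; values are illustrative only.
RULES = {
    "wall_thickness_mm": (0.5, 100.0),
    "ph": (0.0, 14.0),
    "temperature_c": (-50.0, 600.0),
}

# Hypothetical set of fields every field reading must carry.
REQUIRED_FIELDS = ("tag", "parameter", "value", "instrument_id")


def validate(reading):
    """Return a list of problems found in one reading; an empty list means it passes."""
    problems = []
    for field in REQUIRED_FIELDS:
        if reading.get(field) in (None, ""):
            problems.append(f"missing {field}")
    limits = RULES.get(reading.get("parameter"))
    value = reading.get("value")
    if limits is None:
        problems.append("unknown parameter")
    elif isinstance(value, (int, float)) and not limits[0] <= value <= limits[1]:
        problems.append(f"value {value} outside {limits}")
    return problems


good = {"tag": "V-101", "parameter": "ph", "value": 7.2, "instrument_id": "PH-07"}
bad = {"tag": "V-101", "parameter": "ph", "value": 19.0, "instrument_id": ""}
print(validate(good))
print(validate(bad))
```

Requiring an instrument identifier on every reading ties the check back to the use of standardised, traceable measurement instruments.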

Data integration

The future of asset integrity within refineries and plants lies in an integrated data highway. This is because data can come from various incompatible sources, such as multiple types of non-destructive testing (NDT) tools and online monitoring systems that have been collecting data for years with different levels of completeness and complexity.

To integrate these distinct sources, a comprehensive IMA solution that specialises in risk assessment and in the management of inspection and corrosion data, and that offers powerful visualisation capabilities, is fundamental.

An IMA should be able to store and analyse the data and generate actionable insights from it. An IMA with the proper analytic tools can manage multiple assets across multiple facilities in one platform. This increases transparency in the inspection process and helps operators meet compliance requirements. Both owners and operators can simplify the process of integrating data from multiple inputs by partnering with integrity management consultants specialising in a wide variety of solutions. Rather than retroactively integrating incompatible data from multiple sources (such as data from drones, inline inspections (ILI), NDT examinations, and online monitoring systems), relying on a single consultant to execute all of these activities helps ensure that the data collected can be easily and effectively stored and analysed.

Advanced mobile inspection data reporting platforms have developed into mobile cloud-based field inspection, execution, and reporting solutions.

Through the use of handheld devices in the field, mobile inspection data reporting platforms allow technicians to develop reports directly on their device. This further enables them to send information dynamically to an IMA, thus removing the time and manpower inefficiencies present when manually entering data.

For more information:

Don’t miss the Rosen Group’s case study into how a successful software implementation rendered great dividends. Online now!

www.tankstorage.com/partner-news/rosen-group-data-casee-study